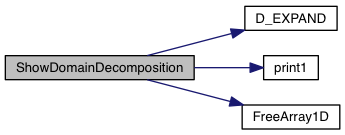

Show the parallel domain decomposition by having each processor print its own computational domain. This is activated with the -show-dec command-line argument. The output can be long when running on several thousand processors.
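To make "each processor's own computational domain" concrete, here is a minimal sketch of an even 1D block decomposition. It is an illustration only: `Range1D` and `block_range` are hypothetical names, ghost zones are ignored, and the splitting rule is an assumption rather than the scheme the code itself uses.

```c
#include <assert.h>

/* Hypothetical type: inclusive global index range owned by one rank. */
typedef struct {
  int beg;  /* first zone owned by the rank */
  int end;  /* last zone owned by the rank  */
} Range1D;

/* Split `nzones` interior zones as evenly as possible among `nprocs`
   ranks and return the range owned by `rank`. Assumption: when the
   division is not exact, the lowest ranks each take one extra zone. */
Range1D block_range(int nzones, int nprocs, int rank)
{
  assert(nprocs > 0 && rank >= 0 && rank < nprocs);

  int base  = nzones / nprocs;  /* zones every rank receives        */
  int extra = nzones % nprocs;  /* number of ranks with one more    */

  Range1D r;
  r.beg = rank * base + (rank < extra ? rank : extra);
  r.end = r.beg + base + (rank < extra ? 1 : 0) - 1;
  return r;
}
```

With 10 zones on 3 ranks, this rule gives rank 0 four zones and ranks 1 and 2 three zones each.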

int ib, ie, jb, je, kb, ke;

int nghx, nghy, nghz;

static int *ib_proc, *ie_proc;

static int *jb_proc, *je_proc;

static int *kb_proc, *ke_proc;

double xb, xe, yb, ye, zb, ze;

double *xb_proc, *xe_proc;

double *yb_proc, *ye_proc;

double *zb_proc, *ze_proc;

xb = Gx->xl[ib]; xe = Gx->xr[ie];

yb = Gy->xl[jb]; ye = Gy->xr[je];

zb = Gz->xl[kb]; ze = Gz->xr[ke];

MPI_Gather (&xb, 1, MPI_DOUBLE, xb_proc, 1, MPI_DOUBLE, 0, MPI_COMM_WORLD);

MPI_Gather (&xe, 1, MPI_DOUBLE, xe_proc, 1, MPI_DOUBLE, 0, MPI_COMM_WORLD);

MPI_Gather (&yb, 1, MPI_DOUBLE, yb_proc, 1, MPI_DOUBLE, 0, MPI_COMM_WORLD);

MPI_Gather (&ye, 1, MPI_DOUBLE, ye_proc, 1, MPI_DOUBLE, 0, MPI_COMM_WORLD);

MPI_Gather (&zb, 1, MPI_DOUBLE, zb_proc, 1, MPI_DOUBLE, 0, MPI_COMM_WORLD);

MPI_Gather (&ze, 1, MPI_DOUBLE, ze_proc, 1, MPI_DOUBLE, 0, MPI_COMM_WORLD);

ib = Gx->beg; ie += Gx->beg - nghx;

jb = Gy->beg; je += Gy->beg - nghy;

kb = Gz->beg; ke += Gz->beg - nghz;
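The three statements above shift the local end index by `beg - nghost` to obtain a global index. A minimal sketch of that conversion follows. `GridSketch` and `local_to_global` are hypothetical names that stand in for the two grid members involved, and the sketch assumes that the first interior zone of the local array sits at local index `nghost`.

```c
#include <assert.h>

/* Hypothetical stand-in holding only the two members used here. */
typedef struct {
  int beg;     /* global start index for the local array */
  int nghost;  /* number of ghost zones                   */
} GridSketch;

/* Convert a local zone index to a global one. Assumption: the first
   interior zone is stored at local index `nghost`, so it maps to `beg`. */
int local_to_global(const GridSketch *g, int i_local)
{
  assert(i_local >= 0);
  return i_local + g->beg - g->nghost;
}
```

Under that assumption the first interior zone maps exactly to `beg`, which is consistent with the begin index being set to `beg` directly while only the end index is shifted.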

MPI_Gather (&ib, 1, MPI_INT, ib_proc, 1, MPI_INT, 0, MPI_COMM_WORLD);

MPI_Gather (&ie, 1, MPI_INT, ie_proc, 1, MPI_INT, 0, MPI_COMM_WORLD);

MPI_Gather (&jb, 1, MPI_INT, jb_proc, 1, MPI_INT, 0, MPI_COMM_WORLD);

MPI_Gather (&je, 1, MPI_INT, je_proc, 1, MPI_INT, 0, MPI_COMM_WORLD);

MPI_Gather (&kb, 1, MPI_INT, kb_proc, 1, MPI_INT, 0, MPI_COMM_WORLD);

MPI_Gather (&ke, 1, MPI_INT, ke_proc, 1, MPI_INT, 0, MPI_COMM_WORLD);

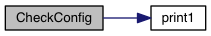

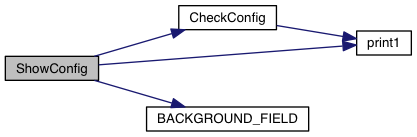

print1 ("> Domain Decomposition (%d procs):\n\n", nprocs);

for (p = 0; p < nprocs; p++){

print1 (" - Proc # %d, X1: [%f, %f], i: [%d, %d], NX1: %d\n",
  p, xb_proc[p], xe_proc[p],
  ib_proc[p], ie_proc[p],
  ie_proc[p]-ib_proc[p]+1);

print1 (" X2: [%f, %f], j: [%d, %d]; NX2: %d\n",
  yb_proc[p], ye_proc[p],
  jb_proc[p], je_proc[p],
  je_proc[p]-jb_proc[p]+1);

print1 (" X3: [%f, %f], k: [%d, %d], NX3: %d\n\n",
  zb_proc[p], ze_proc[p],
  kb_proc[p], ke_proc[p],
  ke_proc[p]-kb_proc[p]+1);
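Each report line prints a coordinate interval, an inclusive index range, and a zone count computed as `end - begin + 1`. The sketch below reproduces one such line with the standard `snprintf`. `zone_count` and `format_extent` are hypothetical helpers written for this illustration and are not part of the listing.

```c
#include <stdio.h>

/* Number of zones in the inclusive index range [ib, ie]. */
int zone_count(int ib, int ie)
{
  return ie - ib + 1;
}

/* Write one extent line such as
   "X1: [xb, xe], i: [ib, ie], NX1: n" into buf.
   Returns the value returned by snprintf. */
int format_extent(char *buf, size_t size, const char *axis, char idx,
                  double xb, double xe, int ib, int ie)
{
  return snprintf(buf, size, "%s: [%f, %f], %c: [%d, %d], N%s: %d",
                  axis, xb, xe, idx, ib, ie, axis, zone_count(ib, ie));
}
```

Because both index bounds are inclusive, a range such as `[4, 35]` holds 32 zones, not 31.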

MPI_Barrier (MPI_COMM_WORLD);

void FreeArray1D(void *v)

void print1(const char *fmt,...)

int beg

Global start index for the local array.

int nghost

Number of ghost zones.

#define ARRAY_1D(nx, type)


int np_tot

Total number of points in the local domain (boundaries included).

int np_int

Total number of points in the local domain (boundaries excluded).